While Everyone Is Talking About Data Centers, the Distribution Grid is the Big Opportunity

Post 4 of 10: The Potential Structural Transformation of the U.S. Electric Utility Industry

The national conversation about electricity right now centers on bulk power: gigawatts of new generation, transmission corridors, capacity auctions, and which fuel source wins the decade. That conversation is important but it is also incomplete.

The part that is not getting enough airtime is what happens after the power leaves the substation: the distribution grid. The wires that connect to your home, your office, the hospital around the corner, and the EV charger in the parking garage. The infrastructure that, in most utility service territories, accounts for roughly a third of total capital expenditure and an even larger share of customer-facing reliability failures. In many places, transmission and distribution together make up more than 50% of the bill, and most of that cost comes from the distribution grid.

Distribution is where the grid actually touches people. It is also the part of the system that is least understood and most underutilized. If we built the distribution grid from scratch today, we would probably build it differently from the one we inherited, given the technological changes of the past few decades.

---

The Forgotten Asset

Most people in the energy industry think of the grid in two layers: bulk power (generation and transmission) and the distribution system below. That framing undersells how different the two actually are.

Transmission operates under federal jurisdiction (FERC) because it often electrically connects two or more states. (ERCOT in Texas is the main exception: its grid does not, so it falls mostly under state jurisdiction.) It moves large blocks of power across long distances at high voltage. It gets most of the regulatory attention, most of the academic research, and, lately, most of the political and press coverage.

Distribution operates under state jurisdiction, PUC by PUC, rate case by rate case. Once voltage is stepped down at a substation, distribution grids move power at lower voltages through a web of feeders, transformers, and laterals to end customers. Distribution grids are typically operated in a radial configuration: power flows in one direction, from the substation out, even though most feeders have switching capability that allows them to be reconfigured. Some distribution networks, such as those in dense urban areas, are meshed. Regardless, they were all designed in the era before rooftop solar, before battery storage, before millions of EV chargers, and before anyone thought a residential customer might want to sell power back to the grid.

That original design assumption is now wrong. But the legacy infrastructure is still mostly what we have.

---

The Utilization Problem

Here is a number that should be central to every utility capital planning discussion and almost never comes up: the load factor of the distribution system.

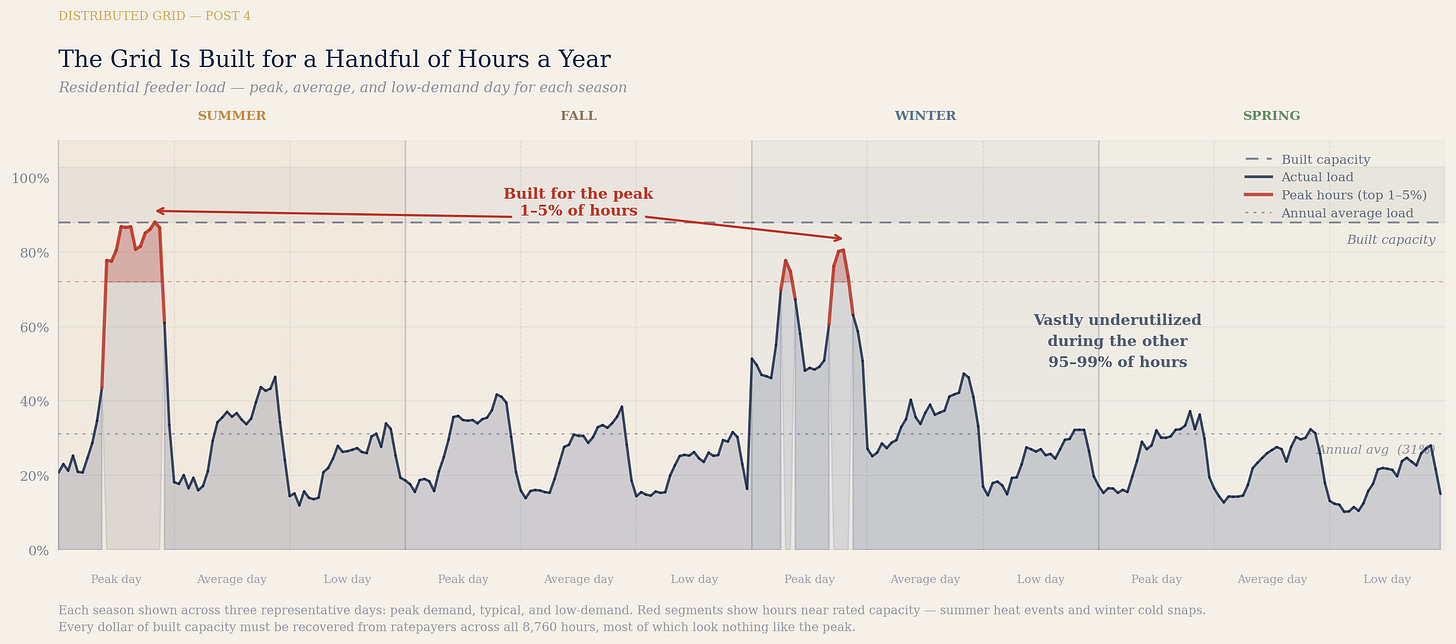

Load factor is the ratio of average load to peak load over a given period. It measures how efficiently a piece of infrastructure is being used relative to what it was built to handle. Think of it as the utilization rate of the installed capacity. A system with a 40% load factor is, on average, carrying 40% of its peak load. If the system is sized for that peak, the other 60% of its capacity exists solely to be available during the handful of hours per year when demand peaks.

This wide gap between average and peak load is what creates the low utilization rates: we need to build for the peak even though most hours use only a fraction of that capacity.
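To make the definition concrete, here is a minimal sketch that computes load factor (average load divided by peak load) from an hourly series. The 24-hour feeder profile is made up for illustration and does not come from any utility's data.

```python
def load_factor(hourly_load_kw):
    """Return average load divided by peak load for the period the series covers."""
    if not hourly_load_kw:
        raise ValueError("need at least one load reading")
    peak = max(hourly_load_kw)
    if peak <= 0:
        raise ValueError("peak load must be positive")
    average = sum(hourly_load_kw) / len(hourly_load_kw)
    return average / peak


# Hypothetical 24-hour feeder profile in kW (illustrative only):
# 20 low hours, 2 shoulder hours, and a 2-hour peak.
feeder_day = [300] * 20 + [800] * 2 + [1000] * 2

lf = load_factor(feeder_day)
print(f"load factor: {lf:.0%}")  # prints "load factor: 40%"
```

In this made-up profile the feeder must be built to carry 1,000 kW, yet for 20 of the 24 hours it carries 300 kW.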

The bulk power system in the United States runs at a system-wide load factor ranging from 43 to 61%, with an average of 53%. For many hours of the year, a large share of the system’s capacity sits idle. Peak demand events occur only a few dozen times per year.

The distribution system is worse than the bulk power system. The reason is customer mix.

The industrial customer running three shifts at a steel mill has a load factor approaching 80 to 90%. Their load is large, flat, and predictable. They use the grid constantly and are the best kind of customer for a utility, at least from an asset utilization standpoint.

The residential customer looks nothing like that. According to Department of Energy data cited by the Smart Electric Power Alliance, the average residential load factor in a hot-climate market like Phoenix is approximately 33 to 34%. This is the best-case scenario for residential customers, because these customers use air conditioning consistently.

In more moderate climates, load factors are likely lower: air conditioning, which creates the peak, runs less often, yet the one-day peak is nearly the same. The local distribution circuit serving a neighborhood of single-family homes is sized for a peak that occurs on a handful of summer afternoons, and it idles most of the rest of the year.

Note: this analysis is a reasoned inference, not a directly published study. State-level system load factors are not routinely published in a directly comparable format. (Although they should be!) The observations reflect the general direction of the available data rather than precise figures, because utilities publish so little of it.

The states with the lowest electricity prices tend to be states with heavy industrial and commercial load. Texas is the clearest example. According to EIA data, Texas, despite its smaller population, consumed roughly twice as much electricity as California in 2023 because of its significantly higher industrial and commercial load. The distribution grid in an industrial service territory runs harder, more consistently, and at higher average utilization than the grid in a territory built around suburban residential and light commercial customers.

The grid (the poles-and-wires portion) is largely a fixed-cost system, so the more kilowatt-hours sold across the same physical infrastructure, the lower the cost per kWh for everyone on the grid.
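The arithmetic behind that statement is simple enough to sketch. The dollar and kWh figures below are hypothetical round numbers, not data for any real utility. The only point is that a fixed cost spread over more sales yields a lower per-kWh charge.

```python
def delivery_cost_per_kwh(annual_fixed_cost_usd, annual_kwh_delivered):
    """Spread a fixed annual wires cost evenly over the energy delivered."""
    if annual_kwh_delivered <= 0:
        raise ValueError("energy delivered must be positive")
    return annual_fixed_cost_usd / annual_kwh_delivered


# Hypothetical: the same poles and wires cost $10 million per year either way.
annual_fixed_cost = 10_000_000

# Assumed annual sales for two illustrative territories on identical infrastructure.
residential_heavy = delivery_cost_per_kwh(annual_fixed_cost, 100_000_000)
industrial_heavy = delivery_cost_per_kwh(annual_fixed_cost, 200_000_000)

print(f"residential-heavy territory: ${residential_heavy:.3f}/kWh")
print(f"industrial-heavy territory:  ${industrial_heavy:.3f}/kWh")
```

Doubling the energy sold over the same assets halves the delivery cost per kWh in this simplified model, which ignores any variable costs.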

Coastal states are stuck in a negative cost loop: labor and environmental regulations have pushed manufacturing away, which lowers utilization of the grid and raises costs per kWh, which pushes still more industry and commerce out of the state and reinforces the high-cost loop.

Manufacturing states like Texas are in a favorable cost loop: a large industrial base produces high utilization of the grid, which lowers costs per kWh for all customers, which attracts more industrial customers and further reinforces the loop.

The grid was built to handle the peak. When the peak is the only thing driving design, you build a lot of copper and steel for a small number of hours. And under today’s regulation the utility is not incentivized to make the system more efficient. The rules reward it for making the problem worse by growing the system.

---

The Capex Explosion That Is Already Happening

Given all of the above, you would expect distribution capital spending to be moderate, focused on maintenance and targeted upgrades. You would be wrong.

Distribution is the single largest capital spending category for U.S. investor-owned utilities. According to EEI data reported by Power Magazine, distribution investment is projected at approximately $66.5 billion in 2025, accounting for roughly one-third of total utility capex, the highest share in more than a decade. According to the Clean Air Task Force, citing Brattle Group data, distribution spending increased by 160% between 2003 and 2023, while peak electricity demand grew negligibly over the same period.

Bank of America Research found that T&D spending grew at an 8.9% compound annual growth rate from 2014 to 2024. U.S. electricity demand over the same period grew at 0.5% per year. Spending on delivery infrastructure is growing roughly 18 times faster than the load it is delivering.
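For readers who want to check the comparison, here is a short sketch using only the two growth rates cited above. The ten-year compounding is my own arithmetic on those rates, not a figure from the Bank of America report.

```python
# Growth rates cited above for 2014 to 2024.
capex_cagr = 0.089   # T&D spending, compound annual growth rate
demand_cagr = 0.005  # U.S. electricity demand, growth per year
years = 10

# Ratio of the two annual growth rates (the "times faster" comparison).
rate_ratio = capex_cagr / demand_cagr

# Cumulative multiples implied by compounding each rate over the decade.
capex_multiple = (1 + capex_cagr) ** years
demand_multiple = (1 + demand_cagr) ** years

print(f"growth-rate ratio: {rate_ratio:.1f}x")
print(f"T&D spending after {years} years: {capex_multiple:.2f}x the starting level")
print(f"demand after {years} years: {demand_multiple:.2f}x the starting level")
```

The ratio of the rates works out to 17.8. Compounded over the decade, spending more than doubles while demand rises by about 5%.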

That gap is not primarily a maintenance backlog. It is a consequence of building for a future peak that may or may not materialize on the timeline assumed, in the configuration assumed, at the locations assumed.

And that growth in peak, even if real, could be solved by cheaper means than building out the grid.

Here is the structural logic that produces this outcome, connecting directly back to what Post 1 established about incentives:

A utility with a handful of thermal constraint hours per year faces a choice. It can pay customers to shift load, export power, or correct power factor during those constrained hours. That costs a little bit of money but produces no rate base to earn on.

Or it can file for a capital investment to relieve the constraint. That is a far more expensive solution, but it goes into rate base and earns a return for 30 to 40 years. It is very profitable even if the marginal utilization rate of the new capex is less than 5%, and likely less than 1%.

Under cost-of-service regulation, the second (and most expensive) option is financially superior. The constraint event is not a problem to solve cheaply. It is a justification to build more rate base.
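A rough sketch of why the second option wins financially. Every input here is a hypothetical assumption chosen only to show the shape of the incentive: the project cost, the allowed return, the asset life, and the number of participating customers. It assumes those customers really could relieve the same constraint. The return calculation is also deliberately simplified (straight-line depreciation, no taxes, no discounting, no capital structure), so it is not how a rate case actually computes a revenue requirement.

```python
def cumulative_return_on_rate_base(capital_cost, allowed_return, life_years):
    """Undiscounted sum of annual returns on a straight-line-depreciated asset.

    Each year's return is earned on the beginning-of-year undepreciated balance.
    """
    total = 0.0
    for year in range(life_years):
        undepreciated_balance = capital_cost * (1 - year / life_years)
        total += undepreciated_balance * allowed_return
    return total


# All inputs are hypothetical and for illustration only.
project_cost = 10_000_000   # capital upgrade to relieve the constraint
allowed_return = 0.09       # assumed blended return on rate base
asset_life = 40             # years the asset stays in rate base

participants = 200          # customers paid to shift load or export
payment_per_year = 300      # dollars per participant per year

build_earnings = cumulative_return_on_rate_base(project_cost, allowed_return, asset_life)
flex_cost = participants * payment_per_year * asset_life
flex_earnings = 0.0         # pass-through expense: no rate base, no return

print(f"utility earnings, build option:       ${build_earnings:,.0f}")
print(f"utility earnings, flexibility option: ${flex_earnings:,.0f}")
print(f"customer cost, build option:          ${project_cost + build_earnings:,.0f}")
print(f"customer cost, flexibility option:    ${flex_cost:,.0f}")
```

Under these assumptions the build option costs customers roughly twelve times as much as the flexibility option. It is also the only one that earns the utility anything.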

The distribution grid is being expanded in part because the regulatory model makes expansion financially attractive, independent of whether expansion is the lowest-cost solution to the underlying constraint. It is what we decided 100 years ago that we wanted the utility to do at all costs: build more. But that is not the right model for today.

A customer with rooftop solar and a battery could help relieve a local constraint for a few hundred dollars per year of compensation, which does nothing for the utility’s profitability. That customer is competing against a capital project that earns the utility millions in regulated returns.

That is not a fair fight. But it’s the only outcome the current rules allow for. And it’s setting us up for massive inflation on the grid.

On data centers: much of what utilities call distribution growth is feeder upgrades, substation reinforcement, and voltage support infrastructure triggered by new large loads. The causation argument applies at the distribution layer just as it does at transmission. If the existing system was meeting NERC reliability standards before a hyperscaler filed an interconnection request, the upgrades triggered by that request were caused by the hyperscaler. They belong on the hyperscaler’s balance sheet, not in the rate base that residential and small commercial customers pay into for 40 years. But utilities profit when they get to deploy their own capital and make everyday customers pay for it, so they use the data center to justify the rate base addition. As I argued in a prior post, the data center, not the customers who fund the rate base, should be on the hook for this cost and utilization risk.

If we don’t let utilities put the downstream impacts of hyperscalers into rate base, rate base growth will moderate. Further, if we remove the capex bias that comes from abnormal ROE, rate base growth will shrink again, to only the capex that is genuinely needed for maintenance and standard upgrades. Costs will fall out of the system drastically, but only if the incentives change.

---

We’re Flying Blind

There is a deeper problem with the distribution grid that almost never makes it into mainstream energy policy conversations. Most utilities do not know the actual real-time topology of their own distribution systems. (An analogy: imagine navigating with paper AAA maps from the 1990s instead of Google Maps. Utilities do not know for certain which roads are open and which are closed. Their picture is based on the last phone call they received from someone in the field.)

That is not an exaggeration. It is a technical reality.

The bulk power system is extensively monitored. Phasor measurement units, SCADA systems, and energy management systems at the transmission level provide near-real-time visibility into the state of the network. Transmission operators can see voltage, current, power flow, and switching state across their footprints with a high degree of confidence. Even then, utilities do not know the true real-time topology of the transmission grid, but the set of possible configurations is more limited than on the distribution grid.

Distribution does not have equivalent visibility. Most utilities have SCADA at the substation level. What happens below the substation, on the feeders and laterals, is largely inferred from models and assumptions, not directly observed.

The Advanced Distribution Management System, or ADMS, was supposed to change this. ADMS platforms were designed to integrate outage management, distribution management, and SCADA into a single environment providing real-time situational awareness of the distribution system. Billions of dollars have been spent deploying them but they have not solved the problem.

NREL’s ADMS Test Bed research found that current systems cannot obtain actionable real-time data across the feeder network. The reason is structural: SCADA sensors are installed at substations, but the feeder below serves hundreds or thousands of nodes with no equivalent instrumentation between them. It is not a distance problem. It is a sensor density problem: without instrumentation at those nodes, the network configuration between them is unknown. And AMI 2.0 is not built to fix this (more on that in a later post).

The result is that every utility I have spoken with in the course of this research knows what its network configuration was when it was last switched (assuming the person on the ground called it in correctly), but does not know what the topology actually is right now. Switching orders may be stale. A fuse may have blown. Field changes may be undocumented. Faults may have altered topology without triggering an alarm. A distribution system serving hundreds of thousands of customers exists in a persistent state of weak observability.

This matters enormously for what comes next in this series. Fault Location, Isolation, and Service Restoration (FLISR) relies on knowing the topology so it can identify which switching actions are safe and which configurations will restore power to isolated customers. Distributed Energy Resource Management Systems (DERMS) rely on knowing the topology so they can calculate how a solar export or a battery discharge will change voltage and current conditions on the feeder. If the topology is unknown, neither system can do what it promises, and both become very imperfect solutions. You are not optimizing the network. You are issuing commands to a network whose state you do not fully know.
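A toy illustration of the stale-topology problem, treating feeders as a graph whose edges are line segments with an open or closed switch state. The node names, the two-feeder layout, and the undocumented field change are all hypothetical. Real ADMS, FLISR, and DERMS network models are far richer than this sketch.

```python
from collections import deque


def energized_nodes(source, segments):
    """Return every node reachable from `source` through closed segments.

    `segments` is a list of (node_a, node_b, is_closed) tuples.
    """
    adjacency = {}
    for node_a, node_b, is_closed in segments:
        if is_closed:
            adjacency.setdefault(node_a, set()).add(node_b)
            adjacency.setdefault(node_b, set()).add(node_a)
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# What the utility's model records: two radial feeders, tie switch open.
recorded = [
    ("sub_A", "n1", True), ("n1", "n2", True), ("n2", "n3", True),
    ("sub_B", "n4", True), ("n4", "n5", True),
    ("n3", "n5", False),   # tie switch between the feeders
]

# What is true in the field after an undocumented switching change:
# the n2-n3 segment was opened and the tie switch was closed.
actual = [
    ("sub_A", "n1", True), ("n1", "n2", True), ("n2", "n3", False),
    ("sub_B", "n4", True), ("n4", "n5", True),
    ("n3", "n5", True),
]

model_view = energized_nodes("sub_A", recorded)
field_view = energized_nodes("sub_A", actual)
misassigned = model_view - field_view

print(f"nodes the model wrongly places on feeder A: {sorted(misassigned)}")
```

In this sketch the model still places `n3` on feeder A, while in the field it is fed from `sub_B`. Any calculation of how a solar export at `n3` affects voltage or loading would be run against the wrong feeder.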

I will unpack what this means for distributed energy resources specifically in the next post. The short version here: be skeptical of claims about the value of DERMS and virtual power plants until the utility modernizes. The foundational data layer is not yet in place to use these devices to their fullest. And the financial incentives of today’s cost-of-service regulation provide no pressure to fix it.

---

What Peaks Look Like When Topology Is Unknown

The combination of spiky utilization and unknown topology produces a predictable outcome: conservative, capacity-biased engineering.

When you know your network, you can operate it closer to its limits because you know where those limits are. When you do not, you build margin into the design. And under today’s rules, that margin is also very profitable. You oversize conductors and transformers for the peak you can imagine, then add more because a misconfiguration could create substantially higher loading with no real-time visibility to catch it. In a heavily residential service territory with load factors at 35% or below, distribution infrastructure is already being built for peaks that are multiples of average load (a 35% load factor implies a peak nearly three times the average). Add topology uncertainty and an engineering culture trained to avoid failures at all costs, and you have a system sized for a peak further above the observed average than the physics requires.

That conservatism is not irrational given the information available to the engineers making the decisions about reliability. It is the correct response to uncertainty using the one tool the utility is allowed to use profitably: build more system.

The industry recognized the topology problem and made a genuine attempt to solve it. Utilities invested billions in ADMS. That investment was real. But ADMS has a structural ceiling that software alone cannot overcome. The sensors live at substations. The feeder network below has no equivalent instrumentation. ADMS optimizes a generic network model, not the actual network. The gap between what the model says and what the network is remains, and it widens each day. If there is a utility that believes its ADMS rollout was successful, I genuinely want to speak with its operations team. Most PUCs have not yet absorbed that these initiatives fell short, partly because utilities have little incentive to report back on past programs. The conclusion the regulatory model points toward is more iron, not a different class of technology.

The deepest problem is structural. Under cost-of-service regulation (COSR), every dollar spent developing the next iteration of sensing and network intelligence competes against a capital project that earns a regulated return for 30 to 40 years. Technology that improves network intelligence enables the utility to build less. Building less reduces rate base. The technology iteration curve is being starved by the earnings model. It is not that utilities are opposed to innovation. It is that the financial structure makes innovation self-defeating. A utility that solves real-time topology awareness has just created the capability to justify building significantly less infrastructure. While that is good for customers, it is not a good outcome for the utility under cost-of-service regulation. The incentive to rapidly reach the next iteration simply does not exist.

This will not change until the earnings model changes. A utility earning performance-based returns tied to network efficiency has a genuine financial reason to fund the next sensing iteration that a COSR utility never will. That is the setup for what comes later in this series.

---

The Platform That Is Already There

Utilities are actually platform companies. And they are the ultimate network company.

The distribution grid connects 100% of all supply and 100% of all demand in its service territory.

That coverage is extraordinary. No technology company has 100% of the customer base as a government protected monopoly. The distribution utility does.

That asset is being treated as a passive delivery network when it is actually the infrastructure layer for a platform business. And utilities are too focused on their cost-of-service rate base mechanics to realize how much more value they could create by becoming platform companies.

This shift means creating an active distribution grid, one with real-time topology awareness, distributed energy resource coordination, and granular metering. That is fundamentally different from a traditional wires company. It becomes the operating system for the energy layer of the built environment. Every home with rooftop solar is a potential generator. Every battery is potential storage. Every EV is a potential flexible buyer and seller. Every commercial building with flexible HVAC is a potential frequency responder. The value of coordinating those resources across the existing network is enormous. And deflationary for customers.

The taxi industry also had geographic coverage. It had local knowledge, licensing infrastructure, and customer relationships. It treated those assets as a dispatch business. It built its fleet to serve the peak hours of Super Bowl Sunday.

Uber reconceived the business as a platform: instead of building to peak, it turned its customers into suppliers while also using price signals to shift consumption. The distribution utility has better coverage, deeper physical integration, and a more defensible regulatory position than any analog from technology. But as I’ll describe in a later post, the current incentives, investors, culture, processes, and teams are stuck in the past and are not the right pieces for this new future.

From a technology perspective, reconception requires three things that cost-of-service regulation actively resists: real-time topology knowledge, advanced metering infrastructure that can measure what distributed resources are contributing, and a financial structure that rewards coordinating the network rather than expanding it. I will work through each of these in later posts in this Substack series.

The grid is already there. The organizations are not.

---

What the Distribution Capex Story Actually Tells Us

Put the pieces together and the distribution grid looks like this:

A system with 30 to 40% average utilization in residential service territories, built for a peak that occurs on a handful of days per year, and on those days for only a handful of hours

Capital spending at record levels, growing nearly 18 times faster than demand over the past decade, now the largest single capex category in the utility sector

A topology that most utilities cannot observe in real time, producing an engineering culture that defaults to capital rather than technology to manage risk

Financial incentives under cost-of-service regulation that reward every one of those dynamics: low utilization, high peaks, uncertain topology, and continuous capital deployment

And at the heart of all the recent capex growth, a set of new large load customers, primarily data centers, whose infrastructure costs are being spread across residential ratepayers rather than charged to the parties who caused them

This is not a story about bad management. Every individual decision in this chain is financially rational given the current incentive structure. The utility that defers a capital investment to pay customers for demand flexibility is leaving money on the table under the current rules, and utilities must act as fiduciaries for their shareholders’ interests, not their customers’. Those interests used to be aligned, but now they are not.

The problem is not the people. The problem is that all of their rational decisions, aggregated across the sector and across tens of billions of dollars, are producing a grid that is grossly over-capitalized relative to what intelligent management of existing assets would require. That over-capitalization is paid for by ratepayers through ever-increasing bills. And the distribution grid, the most intimate layer of the system, the one that connects to every customer, is both the primary site of that over-capitalization and the most underutilized asset available for transformation. And the biggest opportunity for a rethink.

In the next post, I will take up the distributed energy resource question directly: what DERs are actually capable of, why they cannot deliver their full value with today’s grid, and what their real technical barriers are, not just the regulatory ones.

Utility Transformation Series, Post 4 of 10. Next: The Flexibility Revolution Is Real. The Infrastructure Isn’t Ready.

---

Sources

EEI industry capital expenditures by function, 2025 projections: eia.gov / EEI Financial Analysis and Business Analytics

Power Magazine, “Investor-Owned Utilities to Spend $1.1T in Grid Boost,” October 2025: powermag.com

Clean Air Task Force / Brattle Group, distribution spending 160% increase 2003-2023, July 2025: catf.us

Bank of America Institute, “Power Check: Watt’s Going On With The Grid?” 2025 (T&D capex 8.9% CAGR vs. 0.5% demand CAGR 2014-2024): institute.bankofamerica.com/content/dam/transformation/us-electrical-grid.pdf

SEPA (Smart Electric Power Alliance), residential load factor Phoenix AZ approximately 33-34%, citing DOE data: sepapower.org

EIA, Texas electricity consumption vs. California, 2023: eia.gov

National Renewable Energy Laboratory, ADMS Test Bed research notes: nrel.gov

Tyler H. Norris et al., Duke University Nicholas Institute, “Rethinking Load Growth,” February 2025, presentation to ISO-NE Consumer Liaison Group, March 2025 (load factor 43-61%, avg. 53%): iso-ne.com/static-assets/documents/100021/march_clg_meeting_speaker_norris_03_27_25.pdf

Grid Strategies, National Load Growth Report 2025: gridstrategiesllc.com

S&P Global Market Intelligence, utility capex projections 2025-2029: spglobal.com

Assumption note: State-level system load factors used in the Texas vs. coastal states comparison are a reasoned inference from available data on customer mix, industrial load concentration, and average electricity prices. A directly comparable published dataset of distribution-level load factors by state does not exist in the public record. The directional argument is consistent with available EIA sales and peak demand data, but readers should treat the specific load factor comparisons as illustrative rather than precisely sourced.
